INTRODUCTION TO GOOGLE SEARCH ENGINE OPTIMIZATION

If you own, manage, monetize or promote online content through Google Search, this guide is meant for you. You might be the owner of a growing and thriving business, the webmaster of a dozen sites, an SEO specialist in a web agency, or a DIY SEO ninja passionate about the mechanics of Search. If you’re interested in a complete overview of the fundamentals of SEO according to Google’s best practices, you’re in the right place. This guide won’t provide any secrets that will automatically rank your site first in Google (sorry!), but following the best practices outlined below will hopefully make it easier for search engines to crawl, index and understand your content.

Search Engine Optimization (SEO) is often about making small changes to parts of your website. When viewed individually, these changes may seem like incremental improvements, but when combined with other optimizations, they can have a noticeable impact on your site’s user experience and performance in organic search results. You’re probably already familiar with many of the topics in this guide, because they are essential ingredients for any web page, but you may not be making the most of them.

Howdy! Welcome to another SEO article brought to you by www.websitesdavice.com. Are you ready to learn more about Google SEO?

LET’S DETERMINE IF YOU’RE ON GOOGLE

Determine whether your site is in Google’s index: do a site: search for your site’s home URL. If you see results, you’re in the index. For example, a search for “site:websitesadvice.com” returns the indexed pages of that site.

If your site isn’t in Google: although Google crawls billions of pages, it’s inevitable that some sites will be missed. When our crawlers miss a site, it’s frequently for one of the following reasons:

- The site isn’t well connected from other sites on the web

- You’ve just launched a new site and Google hasn’t had time to crawl it yet

- The design of the site makes it difficult for Google to crawl its content effectively

- Google received an error when trying to crawl your site

- Your policy blocks Google from crawling the site

SO, HOW DO I GET MY WEBSITE ON GOOGLE?

Inclusion in Google’s search results is free and easy; you don’t even need to submit your site to Google. Google is a fully automated search engine that uses web crawlers to explore the web constantly, looking for sites to add to our index. In fact, the vast majority of sites listed in our results aren’t manually submitted for inclusion, but are found and added automatically when we crawl the web. Learn how Google discovers, crawls, and serves web pages.

Google offers guidelines to webmasters for building a Google-friendly website. While there is no guarantee that our crawlers will find a particular site, following these guidelines should help make your site appear in our search results. Google Search Console provides tools to help you submit your content to Google and monitor how you’re doing in Google Search. If you want, Search Console can even send you alerts about critical issues that Google encounters with your site. Sign up for Search Console.

DO I NEED AN SEO EXPERT?

An SEO (“search engine optimization”) expert is someone trained to improve your visibility on search engines. By following this guide, you should learn enough to be well on your way to an optimized site. In addition to that, you may want to consider hiring an SEO professional who can help you audit your pages. Deciding to hire an SEO is a big decision that can potentially improve your site and save time. Make sure to research the potential advantages of hiring an SEO, as well as the damage that an irresponsible SEO can do to your site. Many SEOs and other agencies and consultants provide useful services for website owners, including:

- Review of your site content or structure

- Technical advice on website development: for example, hosting, redirects, error pages, use of JavaScript

- Content development

- Management of online business development campaigns

- Keyword research

- SEO training

- Expertise in specific markets and geographies

If you are considering hiring an SEO, the earlier the better. A great time to hire is when you’re considering a site redesign, or planning to launch a new site. That way, you and your SEO can make sure your site is built from the bottom up to be search engine-friendly. But a good SEO can also help improve an existing site.

NOW, YOU SHOULD HELP GOOGLE FIND YOUR CONTENT

The first step to getting your site on Google is to be sure that Google can find it. The best way to do that is to submit a sitemap. A sitemap is a file on your site that tells search engines about new or changed pages on your site. Google also finds pages through links from other pages. See Promote your site later in this document to learn how to encourage people to discover your site.

MORE IMPORTANTLY, YOU SHOULD TELL GOOGLE WHICH PAGES SHOULDN’T BE CRAWLED

Best Practices

For non-sensitive information, block unwanted crawling by using robots.txt

A “robots.txt” file tells search engines whether they can access, and therefore crawl, parts of your site. This file, which must be named “robots.txt”, is placed in the root directory of your site. Pages blocked by robots.txt may still be crawled, so you should use a more secure method for sensitive pages.

You may not want certain pages of your site crawled because they might not be useful to users if they showed up in a search engine’s results. If you want to prevent search engines from crawling your pages, Google Search Console has a helpful robots.txt generator to help you create this file. Note that if your site uses subdomains and you want certain pages not crawled on a particular subdomain, you will need to create a separate robots.txt file for that subdomain.

Avoid:

- Letting your internal search result pages be crawled by Google. Users dislike clicking a search engine result only to land on another search result page on your site.

- Allowing URLs created as a result of proxy services to be crawled.
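
To make the robots.txt rules above a little more concrete, here is a minimal Python sketch that checks a set of rules with the standard library’s urllib.robotparser. The rules themselves and the example.com paths are assumptions invented for this illustration, not a recommended configuration for any real site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative assumptions.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search/
Disallow: /proxy/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that is already in memory (no network access)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# Internal search result pages are blocked for well-behaved crawlers...
print(parser.can_fetch("Googlebot", "https://www.example.com/search/?q=shoes"))
# ...while regular content pages stay crawlable.
print(parser.can_fetch("Googlebot", "https://www.example.com/products/shoes.html"))
```

Keep in mind that this only shows how a compliant crawler would interpret the rules; it does not protect the pages in any way.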

For sensitive information, use more secure methods

Robots.txt is not an appropriate or effective way of blocking sensitive or confidential material. It only instructs well-behaved crawlers that the pages are not for them; it does not prevent your server from delivering those pages to a browser that requests them. One reason is that search engines could still reference the URLs you block (showing just the URL, no title or snippet) if there happen to be links to those URLs somewhere on the Internet (like referrer logs). Also, non-compliant or rogue search engines that don’t acknowledge the Robots Exclusion Standard could disobey the instructions of your robots.txt. Finally, a curious user could examine the directories or subdirectories in your robots.txt file and guess the URL of the content that you don’t want seen.

In these cases, use the noindex tag if you just want the page not to appear in Google, but don’t mind if any user with a link can reach the page. For real security, though, you should use proper authorization methods, such as requiring a user password, or take the page off your site entirely.
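
If you would like to verify that a page really carries a noindex directive, a small script can help. The following is a rough sketch using Python’s built-in html.parser; the sample HTML is made up for illustration, and the script only looks at robots meta tags in the markup (it does not check HTTP headers).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> / <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page markup asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page used only for this example.
sample = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(has_noindex(sample))
```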

Help Google (and users) understand your content by letting Google see your page the same way a user does

When Googlebot crawls a page, it should see the page the same way an average user does. For optimal rendering and indexing, always allow Googlebot access to the JavaScript, CSS, and image files used by your website. If your site’s robots.txt file disallows crawling of these assets, it directly harms how well our algorithms render and index your content. This can result in suboptimal rankings.

Recommended action:

- Use the URL Inspection tool. It will allow you to see exactly how Googlebot sees and renders your content, and it will help you identify and fix a number of indexing issues on your site.

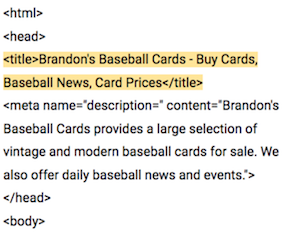

Create unique, accurate page titles

A <title> tag tells both users and search engines what the topic of a particular page is. The <title> tag should be placed within the <head> element of the HTML document. You should create a unique title for each page on your site.

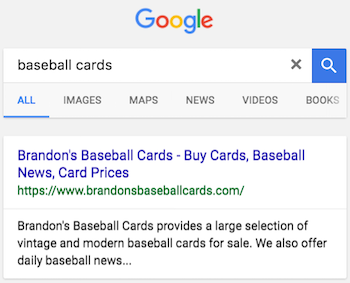

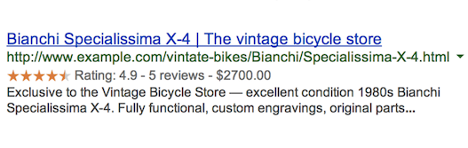

Create good titles and snippets in search results

If your document appears in a search results page, the contents of the title tag may appear in the first line of the results (if you’re unfamiliar with the different parts of a Google search result, you might want to check out the anatomy of a search result video). The title for your homepage can list the name of your website/business and could include other bits of important information like the physical location of the business or maybe a few of its main focuses or offerings.

Best Practices

Accurately describe the page’s content

Choose a title that reads naturally and effectively communicates the topic of the page’s content.

Avoid:

- Choosing a title that has no relation to the content on the page.

- Using default or vague titles like “Untitled” or “New Page 1”.

Create unique titles for each page

Each page on your site should ideally have a unique title, which helps Google know how the page is distinct from the others on your site. If your site uses separate mobile pages, remember to use good titles on the mobile versions too.

Avoid:

- Using a single title across all of your site’s pages or a large group of pages.

Use brief, but descriptive titles

Titles can be both short and informative. If the title is too long or otherwise deemed less relevant, Google may show only a portion of it or one that’s automatically generated in the search result. Google may also show different titles depending on the user’s query or device used for searching.

Avoid:

- Using extremely lengthy titles that are unhelpful to users.

- Stuffing unneeded keywords in your title tags.
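
The title advice above can be partly automated. Below is a minimal Python sketch that flags missing, default, overly long, or duplicated titles across a handful of pages. The 60-character threshold and the list of “vague” titles are assumptions chosen for this example; the guide itself gives no fixed length limit.

```python
from collections import Counter
from html.parser import HTMLParser

VAGUE_TITLES = {"untitled", "new page 1"}  # examples named in this guide
MAX_TITLE_CHARS = 60  # assumption for illustration, not an official limit


class TitleParser(HTMLParser):
    """Collects the text inside the <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_title(html: str) -> str:
    parser = TitleParser()
    parser.feed(html)
    return " ".join(parser.title.split())  # collapse whitespace


def audit_titles(pages: dict[str, str]) -> dict[str, list[str]]:
    """Map each problematic URL to a list of title issues."""
    titles = {url: extract_title(html) for url, html in pages.items()}
    counts = Counter(titles.values())
    problems = {}
    for url, title in titles.items():
        issues = []
        if not title:
            issues.append("missing title")
        elif title.lower() in VAGUE_TITLES:
            issues.append("vague or default title")
        if len(title) > MAX_TITLE_CHARS:
            issues.append("very long title")
        if title and counts[title] > 1:
            issues.append("duplicate title")
        if issues:
            problems[url] = issues
    return problems
```

You would call audit_titles with a dictionary of URL to HTML source, for example the pages you already have on disk.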

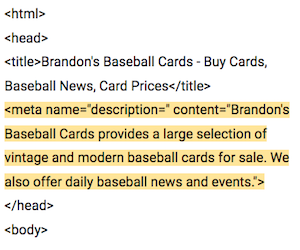

Use the “description” meta tag

A page’s description meta tag gives Google and other search engines a summary of what the page is about. A page’s title may be a few words or a phrase, whereas a page’s description meta tag might be a sentence or two or even a short paragraph. Like the <title> tag, the description meta tag is placed within the <head> element of your HTML document.

What are the merits of description meta tags?

Description meta tags are important because Google might use them as snippets for your pages. Note that we say “might” because Google may choose to use a relevant section of your page’s visible text if it does a good job of matching up with a user’s query. Adding description meta tags to each of your pages is always a good practice in case Google cannot find a good selection of text to use in the snippet. The Webmaster Central Blog has informative posts on improving snippets with better description meta tags and better snippets for your users. There is also a handy Help Center article on how to create good titles and snippets.

Best Practices

Accurately summarize the page content

Write a description that would inform as well as inspire users if they saw your meta tag as a snippet in a search result. While a description meta tag does not have a minimum or maximum length for the text, we recommend making sure that it is sufficiently long to be completely displayed in Search (note that users may see different size snippets depending on how and where they are searching), and that it includes all the relevant information that users would need to decide if the page would be useful and relevant for them.

Avoid:

- Writing a description meta tag that has no relation to the content on the page.

- Using generic descriptions like “This is a web page” or “Page about baseball cards”.

- Filling the description with only keywords.

- Copying and pasting the entire content of the document into the description meta tag.

Use unique descriptions for each page

Having a different description meta tag for each page helps both users and Google, especially in searches where users may bring up multiple pages on your domain (for example, searches using the site: operator). If your site has thousands or even millions of pages, hand-crafting description meta tags probably isn’t feasible. In this case, you could automatically generate description meta tags based on each page’s content.

Avoid:

- Using a single description meta tag across all of your site’s pages or a large group of pages.
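
For large sites where descriptions are generated automatically, one possible approach is to derive them from the opening text of each page. Here is a hedged Python sketch of that idea. The 155-character default is purely an assumption for the example (the description meta tag has no official minimum or maximum length), and a real site would probably want something smarter than “the first sentences of the page”.

```python
import html


def make_description(page_text: str, max_chars: int = 155) -> str:
    """Build a short summary from the start of the page's visible text.

    max_chars is an illustrative assumption, not a documented limit.
    """
    text = " ".join(page_text.split())  # collapse whitespace and newlines
    if len(text) <= max_chars:
        return text
    cut = text[:max_chars]
    # Prefer to stop at the last complete sentence inside the cut.
    end = max(cut.rfind(". "), cut.rfind("! "), cut.rfind("? "))
    if end > 0:
        return cut[: end + 1]
    # Otherwise stop at a word boundary and mark the truncation.
    return cut[: max_chars - 3].rsplit(" ", 1)[0] + "..."


def description_tag(page_text: str) -> str:
    """Render the summary as a description meta tag with escaped content."""
    content = html.escape(make_description(page_text), quote=True)
    return f'<meta name="description" content="{content}">'
```

Because each summary comes from that page’s own content, the resulting descriptions are unique as long as the pages themselves start differently.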

Use heading tags to emphasize important text

Use descriptive headings to denote important topics, and help create a hierarchical structure for your content, making navigation through your document easier for users.

Best Practices

Imagine you’re writing an outline

Similar to writing an outline for a large paper, put some thought into what the main points and sub-points of the content on the page will be and decide where to use heading tags appropriately.

Avoid:

- Placing text in heading tags that wouldn’t be helpful in defining the structure of the page.

- Using heading tags where other tags like <em> and <strong> may be more appropriate.

- Erratically moving from one heading tag size to another.

Use headings sparingly across the page

Use heading tags where it makes sense. Too many heading tags on a page can make it hard for users to scan the content and determine where one topic ends and another begins.

Avoid:

- Excessive use of heading tags on a page.

- Very long headings.

- Using heading tags only for styling text and not presenting structure.
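
One of the points above, erratically moving from one heading size to another, is easy to check with a script. The sketch below is a simple Python illustration that lists places where a page jumps over a heading level (for example from h2 straight to h4). Treating every skipped level as a problem is an assumption of this example; it is a rough hint, not a rule from the guide.

```python
from html.parser import HTMLParser


class HeadingParser(HTMLParser):
    """Records the level (1-6) of every heading tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, level) pairs where the outline skips a level.

    A previous_level of 0 means the page started below h1.
    """
    parser = HeadingParser()
    parser.feed(html)
    jumps = []
    previous = 0
    for level in parser.levels:
        if level > previous + 1:
            jumps.append((previous, level))
        previous = level
    return jumps


# Hypothetical page fragment used only for this example.
print(skipped_levels("<h1>News</h1><h2>Cards</h2><h4>Prices</h4><h2>Events</h2>"))
```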

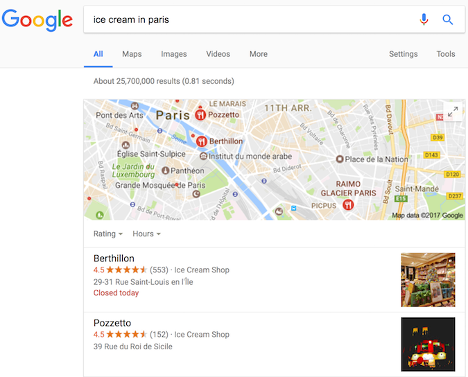

Add structured data markup

Structured data is code that you can add to your site’s pages to describe your content to search engines, so they can better understand what’s on your pages. Search engines can use this understanding to display your content in useful (and eye-catching!) ways in search results. That, in turn, can help you attract just the right kind of customers for your business.

For example, if you’ve got an online store and mark up an individual product page, this helps us understand that the page features a bike, its price, and customer reviews. We may display that information in the snippet for search results for relevant queries. We call these “rich results.”

In addition to using structured data markup for rich results, we may use it to serve relevant results in other formats. For instance, if you’ve got a brick-and-mortar store, marking up the opening hours allows your potential customers to find you exactly when they need you, and tells them whether your store is open or closed at the time of searching.

You can mark up many business-relevant entities:

- Products you’re selling

- Business location

- Videos about your products or business

- Opening hours

- Events listings

- Recipes

- Your company logo, and many more!

We recommend that you use structured data with any of the supported markup notations to describe your content. You can add the markup to the HTML code of your pages, or use tools like Data Highlighter and Markup Helper.
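
To give a feel for what such markup can look like, here is a small Python sketch that builds a JSON-LD snippet (one of the supported notations) for the bike example mentioned earlier, using the schema.org Product, Offer and AggregateRating types. The product name, price and rating values are invented for illustration, and which properties a given rich result actually requires is something you should confirm in the official documentation.

```python
import json


def product_json_ld(name: str, price: float, currency: str,
                    rating: float, review_count: int) -> str:
    """Return a <script> block with schema.org Product markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    # Escape "</" so the JSON can never close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values, used only for this example.
print(product_json_ld("City Bike", 499, "USD", 4.5, 27))
```

The printed block would go into the HTML of the product page, and the values should match what users can actually see on that page.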

Best Practices

Check your markup using the Rich Results test

Once you’ve marked up your content, you can use the Google Rich Results Test to make sure that there are no mistakes in the implementation. You can either enter the URL where the content lives, or copy the actual HTML that includes the markup.

Avoid:

- Using invalid markup.

Use Data Highlighter

If you want to give structured markup a try without changing the source code of your site, you can use Data Highlighter, a free tool integrated into Search Console that supports a subset of content types. If you’d like to get the markup code ready to copy and paste into your page, try the Markup Helper tool.

Avoid:

- Changing the source code of your site when you are unsure about implementing markup.

Keep track of how your marked up pages are doing

The various rich result reports in Search Console show you how many pages on your site we’ve detected with a specific type of markup, how many times they appeared in search results, and how many times people clicked on them over the past 90 days. They also show any errors we’ve detected.

Avoid:

- Adding markup data which is not visible to users.

- Creating fake reviews or adding irrelevant markups.

Manage your appearance in Google Search results

Correct structured data on your pages also makes them eligible for many special features in Search results, including review stars, fancy decorated results, and more.

Organize your site hierarchy

Understand how search engines use URLs

Search engines need a unique URL per piece of content to be able to crawl and index that content, and to refer users to it. Different content (for example, different products in a shop) as well as modified content (for example, translations or regional variations) needs to use separate URLs in order to be shown in search appropriately.

URLs are generally split into multiple distinct sections:

protocol://hostname/path/filename?querystring#fragment

For example:

https://www.example.com/RunningShoes/Womens.htm?size=8#info

Google recommends that all websites use https:// when possible. The hostname is where your website is hosted, commonly using the same domain name that you’d use for email. Google differentiates between the “www” and “non-www” versions (for example, “www.example.com” or just “example.com”). When adding your website to Search Console, we recommend adding both the http:// and https:// versions, as well as the “www” and “non-www” versions.

The path, filename, and query string determine which content from your server is accessed. These three parts are case-sensitive, so “FILE” would result in a different URL than “file”. The hostname and protocol are case-insensitive; upper or lower case wouldn’t play a role there.

A fragment (in this case, “#info”) generally identifies which part of the page the browser scrolls to. Because the content itself is usually the same regardless of the fragment, search engines commonly ignore any fragment used.

When referring to the homepage, a trailing slash after the hostname is optional since it leads to the same content (“https://example.com/” is the same as “https://example.com”). For the path and filename, a trailing slash would be seen as a different URL (signaling either a file or a directory); for example, “https://example.com/fish” is not the same as “https://example.com/fish/”.
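
If you want to see these URL sections for yourself, the following Python sketch splits the example URL from above with the standard library’s urllib.parse. The helper names describe_url and normalize_case are made up for this example; normalize_case lowercases only the protocol and hostname, which are the case-insensitive parts, and assumes the URL contains no username or password.

```python
from urllib.parse import urlsplit


def describe_url(url: str) -> dict:
    """Split a URL into the sections named in this guide."""
    parts = urlsplit(url)
    path, _, filename = parts.path.rpartition("/")
    return {
        "protocol": parts.scheme,
        "hostname": parts.hostname,
        "path": path + "/",
        "filename": filename,
        "querystring": parts.query,
        "fragment": parts.fragment,
    }


def normalize_case(url: str) -> str:
    """Lowercase the protocol and hostname; leave path, filename and query alone."""
    parts = urlsplit(url)
    return parts._replace(
        scheme=parts.scheme.lower(), netloc=parts.netloc.lower()
    ).geturl()


print(describe_url("https://www.example.com/RunningShoes/Womens.htm?size=8#info"))
print(normalize_case("HTTPS://WWW.Example.com/FILE"))
```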

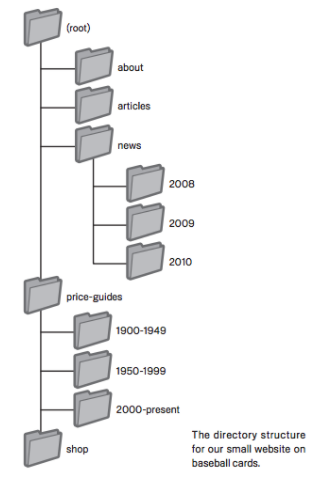

Navigation is important for search engines

The navigation of a website is important in helping visitors quickly find the content they want. It can also help search engines understand what content the webmaster thinks is important. Although Google’s search results are provided at a page level, Google also likes to have a sense of what role a page plays in the bigger picture of the site.

Plan your navigation based on your homepage

Many sites have a home or root page, which is usually the most frequented page on the site and the starting place of navigation for many visitors. Unless your site has only a handful of pages, you should think about how visitors will go from a general page (your root page) to a page containing more specific content. Do you have enough pages around a specific topic area that it would make sense to create a page describing these related pages (for example, root page -> related topic listing -> specific topic)? Do you have hundreds of different products that need to be classified under multiple category and subcategory pages?

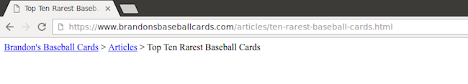

Using ‘breadcrumb lists’

A breadcrumb is a row of internal links at the top or bottom of the page that allows visitors to quickly navigate back to a previous section or the root page. Many breadcrumbs have the most general page (usually the root page) as the first, leftmost link and list the more specific sections out to the right. We recommend using breadcrumb structured data markup when showing breadcrumbs.
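
As a rough idea of what breadcrumb structured data can look like, this Python sketch turns a breadcrumb trail into JSON-LD using the schema.org BreadcrumbList and ListItem types. The page names and example.com URLs are assumptions for illustration only.

```python
import json


def breadcrumb_json_ld(trail: list[tuple[str, str]]) -> str:
    """Build BreadcrumbList JSON-LD from (name, url) pairs, most general first."""
    items = [
        {"@type": "ListItem", "position": position, "name": name, "item": url}
        for position, (name, url) in enumerate(trail, start=1)
    ]
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }
    return json.dumps(data, indent=2)


# Hypothetical trail: root page -> category -> specific page.
print(breadcrumb_json_ld([
    ("Home", "https://www.example.com/"),
    ("Articles", "https://www.example.com/articles/"),
    ("Rarest Baseball Cards", "https://www.example.com/articles/rarest-baseball-cards.html"),
]))
```

The output would be placed inside a script element of type application/ld+json on the page that shows the breadcrumb.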

Create a simple navigational page for users

A navigational page is a simple page on your site that displays the structure of your website, and usually consists of a hierarchical listing of the pages on your site. Visitors may visit this page if they are having problems finding pages on your site. While search engines will also visit this page, getting good crawl coverage of the pages on your site, it’s mainly aimed at human visitors.

Best Practices

Create a naturally flowing hierarchy

Make it as easy as possible for users to go from general content to the more specific content they want on your site. Add navigation pages when it makes sense, and effectively work these into your internal link structure. Make sure all of the pages on your site are reachable through links, and that they don’t require an internal “search” functionality to be found. Link to related pages, where appropriate, to allow users to discover similar content.

Avoid:

- Creating complex webs of navigation links, for example, linking every page on your site to every other page.

- Going overboard with slicing and dicing your content (so that it takes twenty clicks to reach a page from the homepage).

Use text for navigation

Controlling most of the navigation from page to page on your site through text links makes it easier for search engines to crawl and understand your site. When using JavaScript to create a page, use “a” elements with URLs as “href” attribute values, and generate all menu items on page-load, instead of waiting for a user interaction.

Avoid:

- Having a navigation based entirely on images, or animations.

- Requiring script or plugin-based event-handling for navigation.

Create a navigational page for users, a sitemap for search engines

Include a simple navigational page for your entire site (or the most important pages, if you have hundreds or thousands) for users. Create an XML sitemap file to ensure that search engines discover the new and updated pages on your site, listing all relevant URLs together with their primary content’s last modified dates.

Avoid:

- Letting your navigational page become out of date with broken links.

- Creating a navigational page that simply lists pages without organizing them, for example by subject.
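
An XML sitemap like the one described above can be generated with a few lines of code. The following is a minimal Python sketch based on the sitemaps.org format (a urlset of url entries with loc and lastmod). The URLs and dates are placeholders invented for this example; in practice they would come from your CMS or file system.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, date]]) -> bytes:
    """Return sitemap XML (UTF-8 bytes) listing each URL with its last modified date."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages and dates, used only for this example.
sitemap = build_sitemap([
    ("https://www.example.com/", date(2024, 1, 15)),
    ("https://www.example.com/articles/", date(2024, 2, 1)),
])
print(sitemap.decode("utf-8"))
```

The resulting bytes can be written to a file such as sitemap.xml on your site and then submitted in Search Console.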

Show useful 404 pages

Users will occasionally come to a page that doesn’t exist on your site, either by following a broken link or by typing in the wrong URL. Having a custom 404 page that kindly guides users back to a working page on your site can greatly improve the user’s experience. Your 404 page should probably have a link back to your root page, and could also provide links to popular or related content on your site. You can use Google Search Console to find the sources of URLs causing “not found” errors.

Avoid:

- Allowing your 404 pages to be indexed in search engines (make sure that your web server is configured to give a 404 HTTP status code or – in the case of JavaScript-based sites – include a noindex robots meta-tag when non-existent pages are requested).

- Blocking 404 pages from being crawled through the robots.txt file.

- Providing only a vague message like “Not found”, “404”, or no 404 page at all.

- Using a design for your 404 pages that isn’t consistent with the rest of your site.
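
To illustrate the two key points above (return a real 404 HTTP status code, and show a helpful page rather than a bare “Not found”), here is a small Python sketch written as a WSGI application. The PAGES dictionary, the template and the linked sections are all assumptions made up for this example; your own server or framework will have its own way of configuring custom error pages.

```python
from html import escape

# Hypothetical site content; paths and markup are illustrative assumptions.
PAGES = {
    "/": "<h1>Home</h1>",
    "/news/": "<h1>News</h1>",
}

NOT_FOUND_TEMPLATE = """<!DOCTYPE html>
<html><head><title>Page not found</title></head>
<body>
<h1>Sorry, we could not find {path}</h1>
<p>Try the <a href="/">home page</a> or the <a href="/news/">latest news</a>.</p>
</body></html>"""


def app(environ, start_response):
    """Serve known pages with 200; everything else gets a helpful page AND a 404 status."""
    path = environ.get("PATH_INFO", "/")
    if path in PAGES:
        status, body = "200 OK", PAGES[path]
    else:
        # The status line is what tells search engines the page does not exist.
        status = "404 Not Found"
        body = NOT_FOUND_TEMPLATE.format(path=escape(path))
    payload = body.encode("utf-8")
    start_response(status, [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(payload))),
    ])
    return [payload]
```

For local experiments, an app like this can be run with the standard library’s wsgiref.simple_server module.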

Simple URLs convey content information

Creating descriptive categories and filenames for the documents on your website not only helps you keep your site better organized, it can also create easier, “friendlier” URLs for those who want to link to your content. Visitors may be intimidated by extremely long and cryptic URLs that contain few recognizable words.

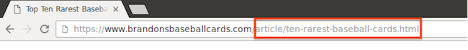

URLs like these can be both confusing and unfriendly.

When your URL is meaningful, it can be more useful and easily understandable in different contexts.
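
One common way to get readable URLs is to derive the filename from the page title. The sketch below shows a simple Python “slugify” helper for that purpose. This is just one possible convention (lowercase words joined by hyphens), assumed here for illustration; it drops characters that have no plain ASCII equivalent.

```python
import re
import unicodedata


def slugify(title: str) -> str:
    """Turn a page title into a short, readable URL segment."""
    # Strip accents where possible, then discard anything non-ASCII.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace every run of other characters with a single hyphen.
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


# Hypothetical article title used only for this example.
print("https://www.example.com/articles/" + slugify("Top 10 Rarest Baseball Cards!") + ".html")
```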

Remember also that with any search engine optimization strategy the ultimate goal is to get more visibility and traffic for your business or the content on your site. Look for ways to help your business and site with search engine traffic: don’t just chase after the latest SEO buzzwords or jump whenever Google makes a recommendation that could improve your search rankings while hurting your overall business.

That’s all for an informative introduction about Google SEO. Do you have any questions, suggestions or any experience regarding this? Let’s discuss down in the comments!

Thank you so much and see you all again!
